16 Jan Why Data Structure Matters More Than Analytics Tools

Why integrating data from different systems often leads to different numbers in reports, and how data standardization helps solve this problem.

Across many industries — from finance and retail to telecommunications and government systems — data integration often leads to the same situation: different departments look at the same data but see different numbers.The reason is usually not dashboards or analytics tools. The reason is the structure of the data.

What This Looks Like in Practice

In many organizations, data comes from several operational systems. Each of these systems was created at a different time, by different teams, and for different purposes. As a result, the same information can be stored in different ways.

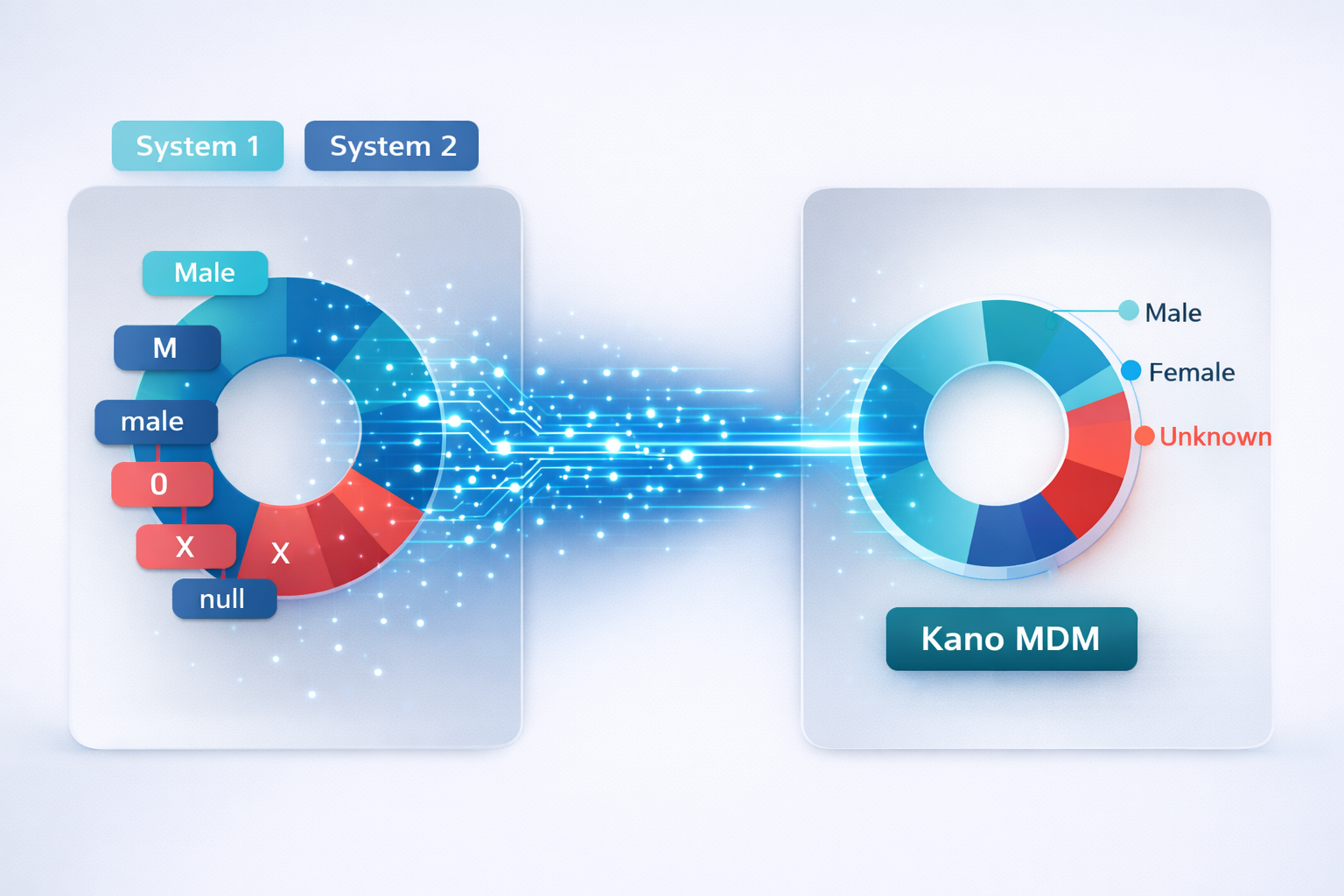

For example, in different systems a basic attribute may be recorded as “M” and “F”, as “Male” and “Female”, in different letter cases, or even as technical values such as “0”, “X”, or “null”. When such data enters an analytics system without prior alignment, it begins to appear as different categories.

As a result, one team may count “Male” and “M” together, while another may count only the value “Male”. Technical values such as “0” or “X” may even be excluded from calculations because analysts simply do not know what they mean.

If all these values enter a unified data environment without normalization, values with the same meaning start to appear as different data categories. Charts begin to show separate indicators for “Male”, “M”, “male”, “0”, “X”, and other variations. This not only distorts the final numbers but also makes dashboards much harder to read.

As a result, managers see overloaded charts with unclear values. For people who make decisions, labels such as “0” or “X” have no obvious meaning, while analytical visualizations should be clear for all users.

Formally, everyone works with the same data sources. However, the final numbers and even the appearance of reports may differ significantly.

Why Data Standardization Is Necessary

A similar situation occurs with other categorical fields — such as statuses, object types, or classifications — where the same category can have dozens of spelling variations, abbreviations, or even typos.

Over time these differences accumulate. In some cases, a single analytical category may correspond to dozens or even hundreds of original values: some stored as codes, some as text descriptions, and some entered by users in free form. As a result, analytics tools begin to interpret data with the same meaning as different categories.

That is why one of the key steps in cross-system analytics projects is data structuring — aligning values, normalizing categories, and creating a unified reference data model. Different value variations are mapped to a limited set of standardized categories and managed reference lists. After such normalization, the data structure begins to reflect the real processes of the organization rather than differences between data sources.

This approach is universal across industries. Whether the data describes customers, products, contracts, services, or other entities, analytics becomes reliable only when the data is defined through a consistent structure and managed classifications.

At the same time, data structure cannot remain static. As organizations evolve, new systems appear, new values are introduced, and new ways of recording information emerge. Therefore, standardization and mapping processes must be continuously maintained.

Supporting Data Structure in the Long Term

In Kano MDM, managing classifications and mappings is not treated as a one-time integration step but as an ongoing managed process. Thanks to a flexible system of roles and access levels, organizations can assign users with extended permissions who administer and maintain these mappings. This allows new data variations to be taken into account in time and keeps the data structure and classifications aligned with real operational processes.

When the data structure is maintained and evolves together with the system, analytics becomes stable. New data sources can be integrated into the existing data structure without rebuilding the entire reporting logic.That is why in any industry strong analytics does not begin with visualization tools.

Its foundation is a well-designed data structure and managed reference data.